Tutorials

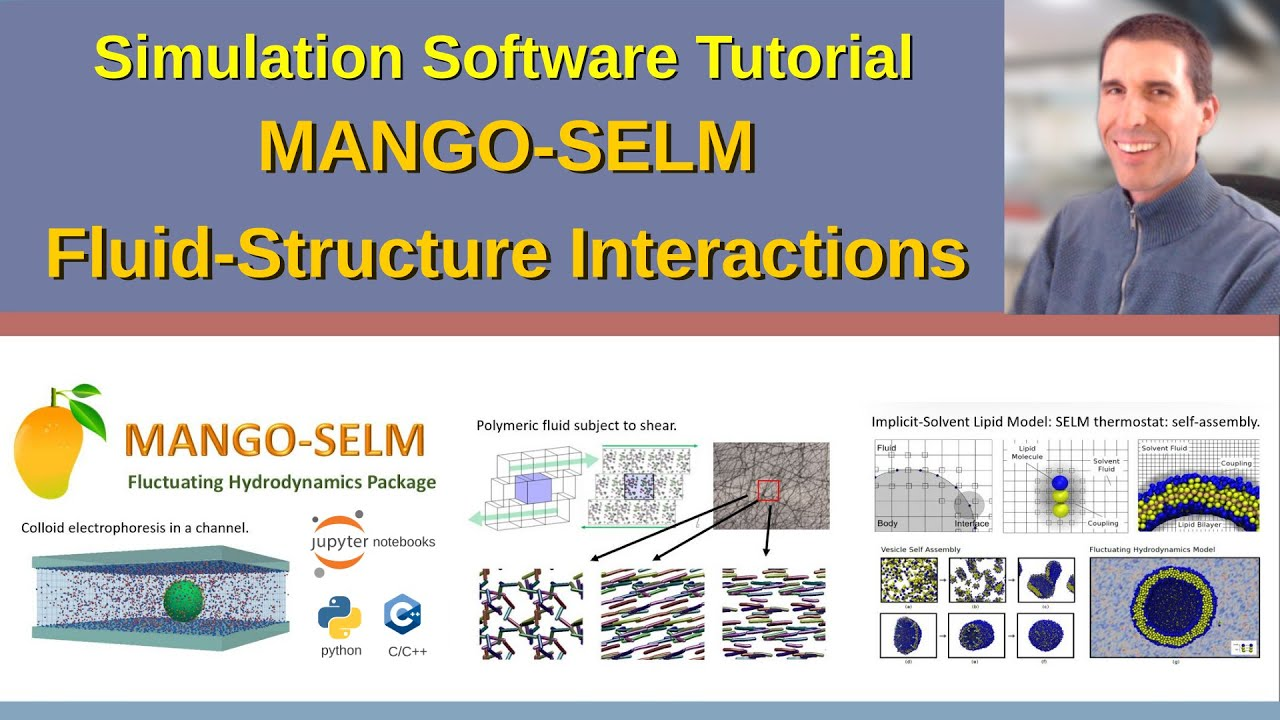

Setting up models and simulations:

- Jupyter Notebook interface and Python (Polymer Example)

- Additional examples on GitHub: mango-selm

- Scripting to set up simulations (FENE-Dimer Example)

Tutorial: How to Set Up Models and Simulations Using Jupyter Notebooks and Python.

Interactive Session.

Slides: [PDF]

(prior versions)

For additional information see the documentation.