[Back to Scharlemann vita]  |

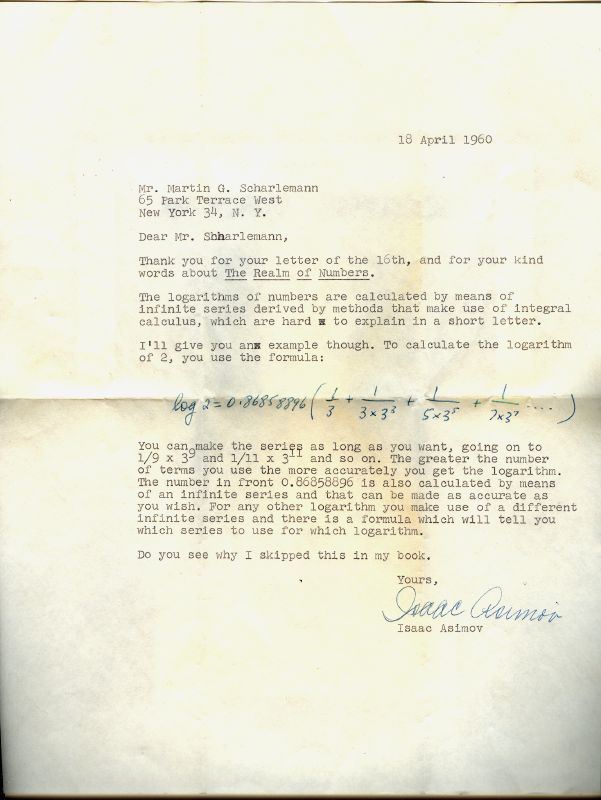

The formula Isaac Asimov gives here can be derived as follows:

Begin with the observation that \(2 = 10^{\log 2} = (e^{\ln 10})^{\log 2} = e^{\ln 10 \cdot \log 2}.\)

Hence \(\ln 2 = \ln 10 \cdot \log 2\) or \( \log 2 = \frac{\ln 2}{\ln 10}.\)

So if we can calculate the natural logarithm (here denote ln) of a number, we can calculate its logarithm with base 10 (here denoted log).

One sequence for natural logarithm can be derived via this calculation: \[ \ln \frac{1+x}{1-x} = \ln (1 + x) - \ln (1 - x) = \int_0^x \frac1{1+t} \; dt + \int_0^x \frac1{1-t} \; dt = \] \[ \int_0^x (1 - t + t^2 - t^3 ...) dt + \int_0^x (1 + t + t^2 + t^3 ...) dt = 2(x + \frac{x^3}3 + \frac{x^5}5 + ...)\]

Make the substitution \[ y = \frac{1+x}{1-x} \implies x = \frac{y - 1}{y + 1}\] to get the formula \[ \ln y = 2[(\frac{y - 1}{y + 1}) + \frac13(\frac{y - 1}{y + 1})^3 + \frac15(\frac{y - 1}{y + 1})^5 + ...]\]

Substitute \(y = 2\) and this becomes \[ \ln 2 = 2[(\frac13) + \frac13(\frac13)^3 + \frac15(\frac13)^5 + ...]\]

If instead you do the calculation (using this or some other method) for \(\ln 10\) you get \[ \frac1{\ln 10} = 0.43429448...\] so, as Asimov states, \[ \log 2 = \frac{\ln 2}{\ln 10} = 2(0.43429448...)[(\frac13) + \frac13(\frac13)^3 + \frac15(\frac13)^5 + ...] = \] \[ (0.86858896...)[(\frac13) + \frac13(\frac13)^3 + \frac15(\frac13)^5 + ...].\]

Of course Asimov was a busy man and he didn't work this out just for an 11 year old fan; it was apparently a standard formula in many algebra books of the time, as I learned by Googling the number .86858896

Why did I ask Asimov why he had not provided a method to compute logarithms? Because I had just learned an easy method that combined several interesting topics that were in his book. Read on below... |

About a year before I wrote to Isaac Asimov, well before I had read his book, a 10-year-old friend of mine down the street (later to become ace algorithmist and UC Irvine computer scientist Professor George Lueker) introduced the kids in the neighborhood to logarithms, and showed us a very cool way to calculate them. When I later read Asimov's book, I was surprised that such an intriguing method of calculation didn't make it into Asimov's beautiful book, and was even more surprised that, in this reply, Asimov gave a much more complicated and opaque method for finding logarithms. On the other hand, I have still never seen George's method described anywhere else; it's quite possible that he discovered it himself. So I herewith commit it to writing, perhaps for the first time, and call it

Lueker's First Algorithm

Suppose you want to find log10a. We might as well assume 1 < a < 10 because you can shift the decimal point on a right or left by just adding or subtracting the number of such shifts from the logarithm.

Step 0: Write down log10a = 0.?

Step 1: Ask the question: is a2 > 10?

If the answer is "yes", move the question mark to the right and replace it with a 1 to get log10a = 0.1?. Then define a1 = a2/10

If the answer is "no", move the question mark to the right and replace it with a 0, to get log10a = 0.0?. Then define a1 = a2.

Step 2: Ask the question: is a12 > 10?

If the answer is "yes", move the question mark to the right and replace it with a 1. Then define a2 = a12/10

If the answer is "no", move the question mark to the right and replace it with a 0. Then define a2 = a12.

.

.

.

Step r: Ask the question: is ar-12 > 10?

If the answer is "yes", move the question mark to the right and replace it with a 1. Then define ar = ar-12/10

If the answer is "no", move the question mark to the right and replace it with a 0. Then define ar = ar-12.

Stop the algorithm if ever ar = 10. The result is a potentially infinite string of 0's and 1's, e. g. log10a = 0.100101001...

When interpreted in binary form (i. e. base 2, not decimal) this number represents log10a.

Example: log103

For a = 3, the above algorithm gives:

log103 = 0.0? and a1 = 9

log103 = 0.01? and a2 = 8.1

log103 = 0.011? and a3 = 6.561

log103 = 0.0111? and a4 = 4.305

log103 = 0.01111? and a5 = 1.853

log103 = 0.011110? and a6 = 3.434

log103 = 0.0111101? and a7 = 1.179

etc.

Interpreted as a binary expansion,

.0111101 = 1/4 + 1/8 + 1/16 + 1/32+ 1/128 = 61/128 = 0.4765625

whereas

log103 ~ 0.477121255...

Not a bad approximation for a 10 year old!

When contacted recently, Prof. Lueker had a slightly different take: he doubts that it was he who first told the neighborhood kids about logarithms, and thinks it's unlikely that he was the first person ever to discover this algorithm, which he then saw as an analogue of long division. Looking back on it now he notes:

One simple way of viewing it is that one can estimate \(\log_{10} x\) by picking a large n and computing \([\log_{10} x^n]/n\). In the algorithm n is a power of 2, \(x^n\) is computed by repeated squaring, and the floor of the log is computed as the number of factors of 10 that can be removed without going below 1; factors of 10 are pulled out as they are found, thus keeping the numbers a reasonable size.