Description

Welcome to the class website for Machine Learning: Special Topics. This graduate seminar will cover select topics in machine learning, spanning both mathematical foundations and practical aspects. The seminar will discuss diverse applications arising in recent data-driven fields, the natural sciences, and engineering. The class will also cover select advanced topics in deep learning and dimension reduction. Please check back for updates, as new information may be posted periodically on the course website.

We plan to use the following books:

- The Elements of Statistical Learning: Data Mining, Inference, and Prediction, by Hastie, Tibshirani, Friedman.

- Foundations of Machine Learning, by Mehryar Mohri, Afshin Rostamizadeh, and Ameet Talwalkar.

The course will also be based on recent papers from the literature and special lecture materials prepared by the instructor. Topics may be adjusted based on the backgrounds and interests of the class.

Syllabus [PDF]

Topics

- Background for Machine Learning / Data Science

- Introduction discussing historic developments and recent motivations

- Concentration Inequalities and Sample Complexity Bounds

- Statistical Learning Theory, PAC-Learnability, related theorems

- Rademacher Complexity, Vapnik-Chervonenkis Dimension

- No-Free-Lunch Theorems

- High Dimensional Probability and Statistics

- Optimization theory and practice

- Motivating applications

- Supervised learning

- Linear methods for regression and classification

- Model selection and bias-variance trade-offs

- Support vector machines

- Kernel methods

- Parametric vs non-parametric regression

- Graphical models

- Neural network methods

- Unsupervised learning

- Clustering methods

- Principal component analysis and related methods

- Manifold learning

- Neural network methods

- Additional topics

- Stochastic gradient descent

- First-order non-linear optimization methods

- Markov chain Monte Carlo (MCMC) sampling for posterior distributions

- Sampling with Itô stochastic processes

- Variational inference

- Iterative methods and preconditioning

- Dimensionality reduction

- Sparse matrix methods

- Stochastic averaging and multiscale methods

- Example applications

Prerequisites:

Linear algebra, probability, and ideally some programming experience.

Supplemental Materials:

- Python 3.7 Documentation.

- Numpy Tutorial

- Anaconda Tutorial

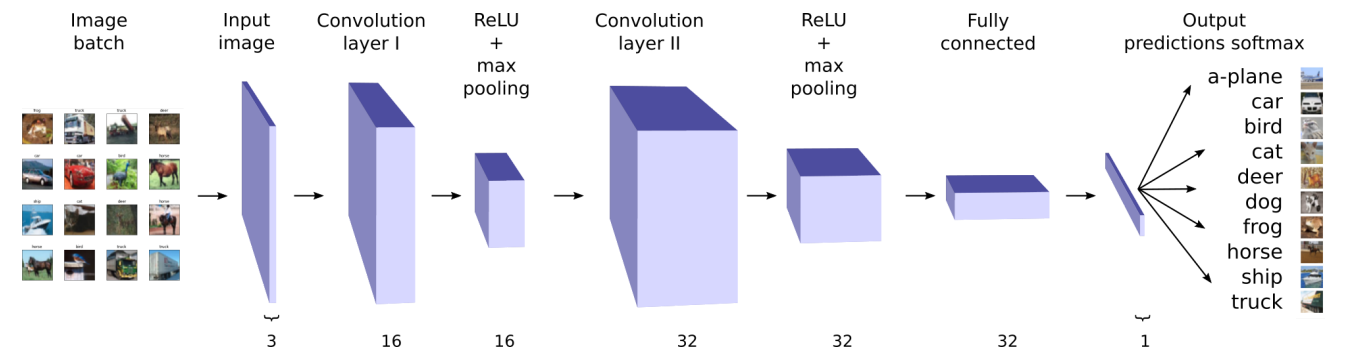

- Image Classification using Convolutional Neural Networks

- [Additional Course Notes and Resources Page]

Additional Materials

- You can find additional materials on my related data science course websites.